When everything is news, nothing is news

Thoughtlessly repeating PR talking points and sharing guesswork as rumor is creating a trust problem.

The death of SEO thanks to Google monetizing others' work through AI scraping theft has led to wholly new problems surrounding tech reporting. While some genuinely amazing independent blogs and websites have appeared with a commitment to providing something unique and tailored, others have doubled down on flooding the web with anything and everything.

You can see the results. Those websites that respect the reader's time and have something to say get a dedicated following that supports them financially. The others become more and more hated as their clickbait tactics piss off their readers, and the flood of articles results in no one learning anything new.

I'm not here to point fingers or call out names. I'm aware I work for AppleInsider, and it surely isn't perfect. Still, I can say without a doubt that we put a lot of effort into ensuring we post each story with plenty of context and thought. You'll struggle to find a simple "this happened" with three paragraphs and a link on our website.

I'm thinking about this today because some headlines came across my feed that were blatantly just PR talking points. I'm not sure if the author simply believed the PR as fact or if they didn't bother taking the time to frame it appropriately.

For example, OpenAI put out a press release for GPT-Realtime-2 that says it "keeps the conversation moving while it reasons through a request." First of all, AI models don't reason, they don't think, and they don't understand anything. They're not human.

In spite of OpenAI using human actions to describe what its prediction engine was doing, several publications ran headlines using the same language. The model is able to "reason" through a request, presented as fact. End of story.

When publications simply repeat the PR talking points of a company, and yes, I'm including Apple in that sentiment, they're no longer doing tech journalism. They're acting as an extended PR wing for the company in question, pushing the agenda and vocabulary agreed upon over dozens of internal meetings.

When I call something by Apple's marketing name, it is to give it a label so I can describe it. Ceramic Shield is what Apple calls its more scratch- and impact-resistant cover glass for an iPhone. Do I want to explain that each time? No, I just say Ceramic Shield because that's what it is called and is provably what it does.

That's not what is happening with PR like this from OpenAI. It isn't simplifying an explanation with a company-branded term. Instead, it is applying a human label to a computer process as if that were simply the accepted way to describe the process. It humanizes AI and reinforces the idea that it could replace us.

It's not human, it doesn't think, it isn't replacing us.

I'd love to give a million other examples, but this is the one in front of me today. Using these same thought processes, you can spot these kinds of things in the future and make your own determinations.

So, if not reasoning, what is this AI tool doing?

Here's OpenAI's PR:

> GPT-Realtime-2 is built for live voice interactions where the model keeps the conversation moving while it reasons through a request, calls tools, handles corrections or interruptions, and responds in a way that fits the moment.

Here's what it means in actual terms:

> GPT-Realtime-2 is a model designed to promote continued interaction from the user even when a request is taking longer than desired. The tool processes the request and sends data to the associated agentic tools, all while maintaining interactions with the user to ensure they don't quit the program.

Notice that the first reads as if it describes a person trained for a job, while the second describes a computer program going through its tasks. We don't have to simply regurgitate their language, and if and when we do, we use quotes.

> OpenAI says GPT-Realtime-2 can "reason" through a request.

It's not difficult to ensure we're communicating to readers who is saying what and why. OpenAI wants to convey that it has a real thinking AI that operates like a human, but it doesn't. We shouldn't feed into that.

This kind of acceptance around how grifters discuss AI is what allowed the bubble to form in the first place. Language matters, and people were convinced that this form of advanced autocomplete is an actual thinking computer.

AI is a powerful toolset and can help accelerate work tasks when applied properly. It is not thinking, reasoning, or researching. It's performing commands issued by a computer program controlled by a user.

Media literacy is more important than ever, even when we're talking about tech journalism. Or maybe, especially when talking about tech journalism. So much of our future will be dictated by how technology is implemented that it's important we know how to discuss it with the correct language and understanding, not marketing.

I've had similar thoughts lately about rumors versus gossip in reporting, and about the low-quality rumors that have been shared recently.

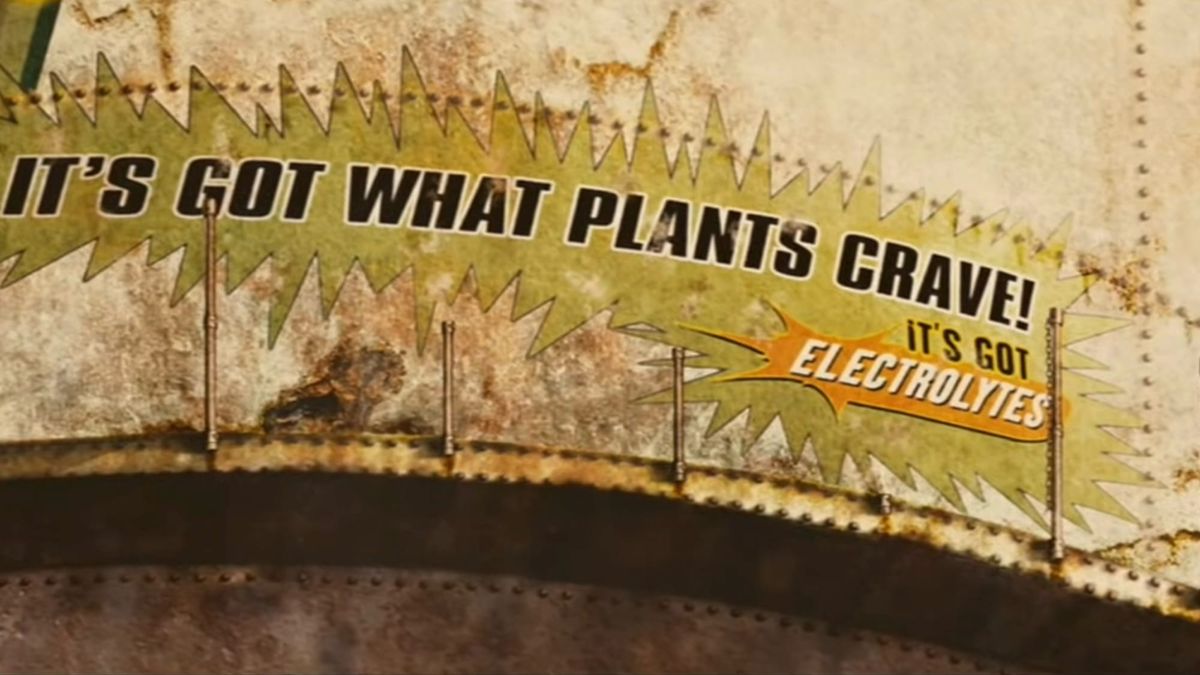

If you just accept that Brawndo has electrolytes without understanding why, you too could end up living in a world like Idiocracy.